5.3 Fairshare

Fairshare allows historical resource utilization information to be incorporated into job feasibility and priority decisions. This feature allows site administrators to set system utilization targets for users, groups, accounts, classes, and QoS levels. Administrators can also specify the time frame over which resource utilization is evaluated in determining whether the goal is being reached. Parameters allow sites to specify the utilization metric, how historical information is aggregated, and the effect of fairshare state on scheduling behavior. Fairshare targets can be specified for any credential (such as user, group, or class) that administrators want this information to affect.

Fairshare is configured at two levels. First, at a system level, configuration is required to determine how fairshare usage information is to be collected and processed. Second, some configuration is required at the credential level to determine how this fairshare information affects particular jobs. The following are system level parameters:

| Parameter | Description |
|---|---|
| FSINTERVAL | Duration of each fairshare window. |
| FSDEPTH | Number of fairshare windows factored into current fairshare utilization. |
| FSDECAY | Decay factor applied to weighting the contribution of each fairshare window. |
| FSPOLICY | Metric to use when tracking fairshare usage. |

Credential level configuration consists of specifying fairshare utilization targets using the *CFG suite of parameters, including ACCOUNTCFG, CLASSCFG, GROUPCFG, QOSCFG, and USERCFG.

|

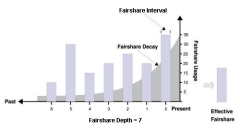

Image 5-1: Effective fairshare over 7 days |

|

|

Click to enlarge |

5.3.1.A FSPOLICY - Specifying the Metric of Consumption

As Moab runs, it records how available resources are used. Each iteration (RMPOLLINTERVAL seconds) it updates fairshare resource utilization statistics. Resource utilization is tracked in accordance with the FSPOLICY parameter allowing various aspects of resource consumption information to be measured. This parameter allows selection of both the types of resources to be tracked as well as the method of tracking. It provides the option of tracking usage by dedicated or consumed resources, where dedicated usage tracks what the scheduler assigns to the job and consumed usage tracks what the job actually uses.

| Metric | Description |
|---|---|
| DEDICATEDPES | Usage tracked by processor-equivalent seconds dedicated to each job. This is based on the total number of dedicated processor-equivalent seconds delivered in the system. Useful in dedicated and shared node environments. |
| DEDICATEDPS | Usage tracked by processor seconds dedicated to each job. This is based on the total number of dedicated processor seconds delivered in the system. Useful in dedicated node environments. |
| DEDICATEDPS% | Usage tracked by processor seconds dedicated to each job. This is based on the total number of dedicated processor seconds available in the system. |
| [NONE] | Disables fairshare. |
| UTILIZEDPS | Usage tracked by processor seconds used by each job. This is based on the total number of utilized processor seconds delivered in the system. Useful in shared node/SMP environments. |

Example 5-5:

An example may clarify the use of the FSPOLICY parameter. Assume a 4-processor job is running a parallel /bin/sleep for 15 minutes. It will have a dedicated fairshare usage of 1 processor-hour but a consumed fairshare usage of essentially nothing since it did not consume anything. Most often, dedicated fairshare usage is used on dedicated resource platforms while consumed tracking is used in shared SMP environments.

FSPOLICY DEDICATEDPS%
FSINTERVAL 24:00:00
FSDEPTH 28
FSDECAY 0.75
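The difference between dedicated and consumed tracking in the /bin/sleep example can be sketched in Python. This is only an illustration of the arithmetic: the function names and the `cpu_utilization` input are assumptions made for the sketch, not Moab interfaces.

```python
def dedicated_ps(processors: int, wall_seconds: float) -> float:
    """Processor-seconds the scheduler dedicated to the job."""
    return processors * wall_seconds


def utilized_ps(processors: int, wall_seconds: float, cpu_utilization: float) -> float:
    """Processor-seconds actually consumed.

    cpu_utilization is a 0..1 fraction; it is an assumed input for this sketch.
    """
    return processors * wall_seconds * cpu_utilization


# A 4-processor parallel /bin/sleep that runs for 15 minutes.
procs, wall = 4, 15 * 60

print(dedicated_ps(procs, wall) / 3600)      # dedicated usage in processor-hours
print(utilized_ps(procs, wall, 0.0) / 3600)  # consumed usage of an idle job
```

Under a DEDICATED* policy the job is charged for the full processor-hour, while under UTILIZEDPS its charge is close to zero.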

5.3.1.B Specifying Fairshare Timeframe

When configuring fairshare, it is important to determine the proper timeframe that should be considered. Many sites choose to incorporate historical usage information from the last one to two weeks while others are only concerned about the events of the last few hours. The correct setting is very site dependent and usually incorporates both average job turnaround time and site mission policies.

With Moab's fairshare system, time is broken into a number of distinct fairshare windows. Sites configure the amount of time they want to consider by specifying two parameters, FSINTERVAL and FSDEPTH. The FSINTERVAL parameter specifies the duration of each window while the FSDEPTH parameter indicates the number of windows to consider. Thus, the total time evaluated by fairshare is simply FSINTERVAL * FSDEPTH.
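As a quick check of the FSINTERVAL * FSDEPTH relationship, the following Python sketch computes the total timeframe for two of the configurations used in this section. The helper names are hypothetical, and the parser only handles the HH:MM:SS form shown in the examples here.

```python
from datetime import timedelta


def parse_hms(text: str) -> timedelta:
    """Parse an HH:MM:SS string such as '12:00:00'."""
    hours, minutes, seconds = (int(part) for part in text.split(":"))
    return timedelta(hours=hours, minutes=minutes, seconds=seconds)


def fairshare_timeframe(fsinterval: str, fsdepth: int) -> timedelta:
    """Total time evaluated by fairshare: window duration times window count."""
    return parse_hms(fsinterval) * fsdepth


print(fairshare_timeframe("24:00:00", 28))  # 28 one-day windows
print(fairshare_timeframe("12:00:00", 14))  # 14 half-day windows (one week)
```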

Many sites want to limit the impact of fairshare data according to its age. The FSDECAY parameter allows this, causing the most recent fairshare data to contribute more to a credential's total fairshare usage than older data. This parameter is specified as a standard decay factor, which is applied to the fairshare data. Generally, decay factors are specified as a value between 0 and 1, where a value of 1 (the default) indicates that no decay is applied. The smaller the number, the more rapid the decay, using the calculation WeightedValue = Value * <DECAY> ^ <N>, where <N> is the window number (0 for the current window). The following table shows the impact of a number of commonly used decay factors on the percentage contribution of each fairshare window.

| Decay Factor | Win0 | Win1 | Win2 | Win3 | Win4 | Win5 | Win6 | Win7 |
|---|---|---|---|---|---|---|---|---|
| 1.00 | 100% | 100% | 100% | 100% | 100% | 100% | 100% | 100% |
| 0.80 | 100% | 80% | 64% | 51% | 41% | 33% | 26% | 21% |
| 0.75 | 100% | 75% | 56% | 42% | 32% | 24% | 18% | 13% |
| 0.50 | 100% | 50% | 25% | 13% | 6% | 3% | 2% | 1% |

While selecting how the total fairshare time frame is broken up between the number and length of windows is a matter of preference, it is important to note that more windows will cause the decay factor to degrade the contribution of aged data more quickly.
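The effect of the window count can be seen by evaluating the decay formula directly. The sketch below (with a hypothetical helper, `decay_weights`) prints the unrounded per-window weights and then compares the oldest window's weight when the same overall timeframe is split into 8 windows versus 4 windows.

```python
def decay_weights(decay: float, depth: int) -> list[float]:
    """Weight of each window: decay ** N, with N = 0 for the current window."""
    return [decay ** n for n in range(depth)]


# Per-window contribution for some commonly used decay factors.
for decay in (1.00, 0.80, 0.75, 0.50):
    row = " ".join(f"{w:6.1%}" for w in decay_weights(decay, 8))
    print(f"{decay:.2f} {row}")

# Same total timeframe and decay factor, different window counts:
oldest_of_8 = decay_weights(0.75, 8)[-1]  # 0.75 ** 7
oldest_of_4 = decay_weights(0.75, 4)[-1]  # 0.75 ** 3
print(f"oldest of 8 windows: {oldest_of_8:.1%}")
print(f"oldest of 4 windows: {oldest_of_4:.1%}")
```

With 8 windows the oldest data is weighted far less than with 4 windows, even though both configurations cover the same span of time.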

5.3.1.C Managing Fairshare Data

Using the selected fairshare usage metric, Moab continues to update the current fairshare window until it reaches a fairshare window boundary, at which point it rolls the fairshare window and begins updating the new window. The information for each window is stored in its own file located in the Moab statistics directory. Each file is named FS.<EPOCHTIME>[.<PNAME>] where <EPOCHTIME> is the time the new fairshare window became active (see sample data file) and <PNAME> is only used if per-partition share trees are configured. Each window contains utilization information for each entity as well as for total usage.
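Because the window start time is encoded in the file name, a small script can list which windows are on disk. The following Python sketch only interprets the FS.<EPOCHTIME>[.<PNAME>] naming pattern described above; the file names used are made-up examples, and the script does not read the file contents.

```python
from datetime import datetime, timezone


def parse_fs_filename(name: str):
    """Split 'FS.<EPOCHTIME>[.<PNAME>]' into (window start in UTC, partition or None)."""
    parts = name.split(".", 2)
    if len(parts) < 2 or parts[0] != "FS":
        raise ValueError(f"not a fairshare data file name: {name!r}")
    start = datetime.fromtimestamp(int(parts[1]), tz=timezone.utc)
    partition = parts[2] if len(parts) == 3 else None
    return start, partition


# Hypothetical file names, for illustration only.
for filename in ("FS.1200000000", "FS.1200000000.slave1"):
    start, partition = parse_fs_filename(filename)
    print(filename, start.isoformat(), partition)
```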

Historical fairshare data is recorded in the fairshare file using the metric specified by the FSPOLICY parameter. By default, this metric is processor-seconds.

Historical fairshare data can be directly analyzed and reported using the mdiag -f -v command.

When Moab needs to determine current fairshare usage for a particular credential, it calculates a decay-weighted average of the usage information for that credential using the most recent fairshare intervals where the number of windows evaluated is controlled by the FSDEPTH parameter. For example, assume the credential of interest is user john and the following parameters are set:

FSINTERVAL 12:00:00
FSDEPTH 4
FSDECAY 0.5

Further assume that the fairshare usage intervals have the following usage amounts:

| Fairshare interval | Total user john usage | Total cluster usage |
|---|---|---|
| 0 | 60 | 110 |
| 1 | 0 | 125 |
| 2 | 10 | 100 |
| 3 | 50 | 150 |

Based on this information, the current fairshare usage for user john would be calculated as follows:

Usage = (60 * 1 + .5^1 * 0 + .5^2 * 10 + .5^3 * 50) / (110 + .5^1*125 + .5^2*100 + .5^3*150)

The current fairshare usage is relative to the actual resources delivered by the system over the timeframe evaluated, not the resources available or configured during that time.
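The calculation for user john can be reproduced with a few lines of Python. This is a sketch of the decay-weighted ratio as written above, not Moab's internal code, and the function name is hypothetical.

```python
def fairshare_usage(cred_usage, total_usage, decay):
    """Decay-weighted credential usage divided by decay-weighted total usage.

    Both lists are ordered from the current window (index 0) to the oldest.
    """
    weighted_cred = sum(u * decay ** n for n, u in enumerate(cred_usage))
    weighted_total = sum(t * decay ** n for n, t in enumerate(total_usage))
    return weighted_cred / weighted_total


john = [60, 0, 10, 50]          # user john usage per interval
cluster = [110, 125, 100, 150]  # total cluster usage per interval

usage = fairshare_usage(john, cluster, decay=0.5)
print(f"{usage:.2%}")  # 68.75 / 216.25 -> 31.79%
```

The numerator evaluates to 68.75 and the denominator to 216.25, so john's current fairshare usage is roughly 31.8% of delivered resources.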

Historical fairshare data is organized into a number of data files, each file containing the information for a length of time as specified by the FSINTERVAL parameter. Although FSDEPTH, FSINTERVAL, and FSDECAY can be freely and dynamically modified, such changes may result in unexpected fairshare status for a period of time as the fairshare data files with the old FSINTERVAL setting are rolled out.

5.3.2 Using Fairshare Information

Once the global fairshare policies have been configured, the next step involves applying resulting fairshare usage information to affect scheduling behavior. As mentioned in the Fairshare Overview, by specifying fairshare targets, site administrators can configure how fairshare information impacts scheduling behavior. The targets can be applied to user, group, account, QoS, or class credentials using the FSTARGET attribute of *CFG credential parameters. These targets allow fairshare information to affect job priority and each target can be independently selected to be one of the types documented in the following table:

| Target type | Target modifier | Job impact | Format | Description |
|---|---|---|---|---|
| Ceiling | - | Priority | Percentage Usage | Adjusts job priority down when usage exceeds target. See How violated ceilings and floors affect fairshare-based priority for more information on how ceilings affect job priority. |
| Floor | + | Priority | Percentage Usage | Adjusts job priority up when usage falls below target. See How violated ceilings and floors affect fairshare-based priority for more information on how floors affect job priority. |
| Target | N/A | Priority | Percentage Usage | Adjusts job priority when usage does not meet target. |

Setting a fairshare target value of 0 indicates that there is no target and that the priority of jobs associated with that credential should not be affected by the credential's previous fairshare target. If you want a credential's cluster usage near 0%, set the target to a very small value, such as 0.001.

Example

The following example increases the priority of jobs belonging to user john until he reaches 16.5% of total cluster usage. All other users have priority adjusted both up and down to bring them to their target usage of 10%:

FSPOLICY DEDICATEDPS
FSWEIGHT 1
FSUSERWEIGHT 100
USERCFG[john] FSTARGET=16.5+
USERCFG[DEFAULT] FSTARGET=10
...

Where fairshare targets affect a job's priority and position in the eligible queue, fairshare caps affect a job's eligibility. Caps can be applied to users, accounts, groups, classes, and QoSs using the FSCAP attribute of *CFG credential parameters and can be configured to modify scheduling behavior. Unlike fairshare targets, if a credential reaches its fairshare cap, its jobs can no longer run and are thus removed from the eligible queue and placed in the blocked queue. In this respect, fairshare targets behave like soft limits and fairshare caps behave like hard limits. Fairshare caps can be absolute or relative as described in the following table. If no modifier is specified, the cap is interpreted as relative.

| Cap type | Cap modifier | Job impact | Format | Description |
|---|---|---|---|---|
| Absolute Cap | ^ | Feasibility | Absolute Usage | Constrains job eligibility as an absolute quantity measured according to the scheduler charge metric as defined by the FSPOLICY parameter. |
| Relative Cap | % | Feasibility | Percentage Usage | Constrains job eligibility as a percentage of total delivered cycles measured according to the scheduler charge metric as defined by the FSPOLICY parameter. |

Example

The following example constrains the marketing account to use no more than 16,500 processor seconds during any given floating one week window. At the same time, all other accounts are constrained to use no more than 10% of the total delivered processor seconds during any given one week window.

FSPOLICY DEDICATEDPS
FSINTERVAL 12:00:00
FSDEPTH 14
ACCOUNTCFG[marketing] FSCAP=16500^
ACCOUNTCFG[DEFAULT] FSCAP=10
...
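The difference between absolute and relative caps can be sketched in Python. The parsing follows the modifiers described above (`^` for absolute, `%` or no modifier for relative). The function names and usage numbers are invented for illustration, and the comparison is a simplified reading of "reaches its fairshare cap" rather than Moab's actual eligibility code.

```python
def parse_fscap(spec: str):
    """Return (value, kind) for an FSCAP value such as '16500^', '10%', or '10'."""
    spec = spec.strip()
    if spec.endswith("^"):
        return float(spec[:-1]), "absolute"
    if spec.endswith("%"):
        return float(spec[:-1]), "relative"
    return float(spec), "relative"  # no modifier: interpreted as relative


def is_blocked(spec: str, cred_usage: float, total_usage: float) -> bool:
    """True if the credential has reached its cap (assumed comparison: >=)."""
    value, kind = parse_fscap(spec)
    if kind == "absolute":
        return cred_usage >= value
    if total_usage == 0:
        return False
    return 100.0 * cred_usage / total_usage >= value


# Hypothetical usage, in processor-seconds, over the evaluated timeframe.
total = 200_000
print(is_blocked("16500^", 17_000, total))  # marketing: over the absolute cap
print(is_blocked("10", 15_000, total))      # 7.5% of delivered cycles
print(is_blocked("10", 25_000, total))      # 12.5% of delivered cycles
```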

5.3.2.C Priority-Based Fairshare

The most commonly used type of fairshare is priority-based fairshare. In this mode, fairshare information does not affect whether a job can run, but rather only the job's priority relative to other jobs. In most cases, this is the desired behavior. Using the standard fairshare target, the priority of jobs of a particular user who has used too many resources over the specified fairshare window is lowered. Also, the standard fairshare target increases the priority of jobs that have not received enough resources.

While the standard fairshare target is the most commonly used, Moab can also specify fairshare ceilings and floors. These targets are like the default target; however, ceilings only adjust priority down when usage is too high and floors only adjust priority up when usage is too low.
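The three target styles can be contrasted with a short Python sketch. It parses an FSTARGET value using the `+` (floor) and `-` (ceiling) modifiers and returns a signed adjustment. The adjustment is assumed here to be proportional to target minus usage. This is an illustrative simplification with hypothetical function names, not the exact priority formula Moab applies.

```python
def parse_fstarget(spec: str):
    """Return (value, kind) for an FSTARGET value such as '16.5+', '15.0-', or '10'."""
    spec = spec.strip()
    if spec.endswith("+"):
        return float(spec[:-1]), "floor"
    if spec.endswith("-"):
        return float(spec[:-1]), "ceiling"
    return float(spec), "target"


def priority_delta(spec: str, usage_pct: float) -> float:
    """Signed adjustment (assumed: target - usage), clipped by target type."""
    value, kind = parse_fstarget(spec)
    if value == 0:
        return 0.0  # a target of 0 means no target
    delta = value - usage_pct
    if kind == "floor":
        return max(delta, 0.0)  # floors only raise priority
    if kind == "ceiling":
        return min(delta, 0.0)  # ceilings only lower priority
    return delta                # plain targets adjust in both directions


print(priority_delta("16.5+", 10.0))  # below floor: positive adjustment
print(priority_delta("16.5+", 20.0))  # above floor: no adjustment
print(priority_delta("15.0-", 20.0))  # above ceiling: negative adjustment
print(priority_delta("10", 12.0))     # above plain target: negative adjustment
```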

Since fairshare usage information must be integrated with Moab's overall priority mechanism, it is critical that the corresponding fairshare priority weights be set. Specifically, the FSWEIGHT component weight parameter and the target type subcomponent weight (such as FSACCOUNTWEIGHT, FSCLASSWEIGHT, FSGROUPWEIGHT, FSQOSWEIGHT, and FSUSERWEIGHT) must be specified.

If these weights are not set, the fairshare mechanism will be enabled but have no effect on scheduling behavior. See the Job Priority Factor Overview for more information on setting priority weights.

Example

# set relative component weighting
FSWEIGHT 1
FSUSERWEIGHT 10
FSGROUPWEIGHT 50
FSINTERVAL 12:00:00
FSDEPTH 4
FSDECAY 0.5
FSPOLICY DEDICATEDPS
# all users should have a FS target of 10%
USERCFG[DEFAULT] FSTARGET=10.0
# user john gets extra cycles
USERCFG[john] FSTARGET=20.0
# reduce staff priority if group usage exceeds 15%
GROUPCFG[staff] FSTARGET=15.0-
# give group orion additional priority if usage drops below 25.7%
GROUPCFG[orion] FSTARGET=25.7+

Job preemption status can be adjusted based on whether the job violates a fairshare target using the ENABLEFSVIOLATIONPREEMPTION parameter.

5.3.2.D Credential-Specific Fairshare Weights

Credential-specific fairshare weights can be set using the FSWEIGHT attribute of the ACCOUNT, GROUP, and QOS credentials as in the following example:

FSWEIGHT 1000
ACCOUNTCFG[orion1] FSWEIGHT=100
ACCOUNTCFG[orion2] FSWEIGHT=200
ACCOUNTCFG[orion3] FSWEIGHT=-100
GROUPCFG[staff] FSWEIGHT=10

If specified, a per-credential fairshare weight is added to the global component fairshare weight.

The FSWEIGHT attribute is only enabled for ACCOUNT, GROUP, and QOS credentials.
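Applied to the example above, the additive rule gives the following effective weights. The Python below only illustrates that arithmetic. The dictionary layout and function name are assumptions made for the sketch.

```python
GLOBAL_FSWEIGHT = 1000

# Per-credential FSWEIGHT values from the example configuration.
CRED_FSWEIGHT = {
    ("ACCOUNT", "orion1"): 100,
    ("ACCOUNT", "orion2"): 200,
    ("ACCOUNT", "orion3"): -100,
    ("GROUP", "staff"): 10,
}


def effective_fsweight(cred_type: str, name: str) -> int:
    """Global fairshare weight plus the per-credential weight, if one is set."""
    return GLOBAL_FSWEIGHT + CRED_FSWEIGHT.get((cred_type, name), 0)


for key in CRED_FSWEIGHT:
    print(key, effective_fsweight(*key))
```

A negative per-credential weight, as for orion3, reduces the effective weight below the global value.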

5.3.2.E Fairshare Usage Scaling

Moab uses the FSSCALINGFACTOR attribute for QOS credentials to get the calculated fairshare usage of a job.

QOSCFG[qos1] FSSCALINGFACTOR=<double>

Moab will multiply the actual fairshare usage by this value to get the calculated fairshare usage of a job. The actual fairshare usage is calculated based on the FSPOLICY parameter.

For example, if FSPOLICY is set to DEDICATEDPS and a job runs on two processors for 100 seconds, then the actual fairshare usage would be 200. If the job ran on a QoS with FSSCALINGFACTOR=.5, then Moab would multiply 200*.5=100. If the job ran on a partition with FSSCALINGFACTOR=2, then Moab would multiply 200*2=400.

PARCFG also lets you specify the FSSCALINGFACTOR for partitions. See 6.8.5 Per-Partition Settings.

5.3.2.F Extended Fairshare Examples

Example 5-6: Multi-Cred Cycle Distribution

This example represents a university setting where different schools have access to a cluster. The Engineering department has put the most money into the cluster and therefore has greater access to the cluster. The Math, Computer Science, and Physics departments have also pooled their money into the cluster and have reduced relative access. A support group also has access to the cluster, but since they only require minimal compute time and shouldn't block the higher-paying departments, they are constrained to five percent of the cluster. At this time, users Tom and John have specific high-priority projects that need increased cycles.

#global general usage limits - negative priority jobs are considered in scheduling
ENABLENEGJOBPRIORITY TRUE
# site policy - no job can last longer than 8 hours
USERCFG[DEFAULT] MAX.WCLIMIT=8:00:00
# Note: default user FS target only specified to apply default user-to-user balance
USERCFG[DEFAULT] FSTARGET=1
# high-level fairshare config
FSPOLICY DEDICATEDPS
FSINTERVAL 12:00:00
FSDEPTH 32 #recycle FS every 16 days
FSDECAY 0.8 #favor more recent usage info
# qos config
QOSCFG[inst] FSTARGET=25
QOSCFG[supp] FSTARGET=5
QOSCFG[premium] FSTARGET=70
# account config (QoS access and fstargets)
# Note: user-to-account mapping handled via accounting manager
# Note: FS targets are percentage of total cluster, not percentage of QOS
ACCOUNTCFG[cs] QLIST=inst FSTARGET=10
ACCOUNTCFG[math] QLIST=inst FSTARGET=15
ACCOUNTCFG[phys] QLIST=supp FSTARGET=5
ACCOUNTCFG[eng] QLIST=premium FSTARGET=70
# handle per-user priority exceptions
USERCFG[tom] PRIORITY=100
USERCFG[john] PRIORITY=35
# define overall job priority
USERWEIGHT 10 # user exceptions
# relative FS weights (Note: QOS overrides ACCOUNT which overrides USER)
FSUSERWEIGHT 1
FSACCOUNTWEIGHT 10
FSQOSWEIGHT 100
# apply XFactor to balance cycle delivery by job size fairly
# Note: queuetime factor also on by default (use QUEUETIMEWEIGHT to adjust)
XFACTORWEIGHT 100
# enable preemption
PREEMPTPOLICY REQUEUE
# temporarily allow phys to preempt math
ACCOUNTCFG[phys] JOBFLAGS=PREEMPTOR PRIORITY=1000
ACCOUNTCFG[math] JOBFLAGS=PREEMPTEE

5.3.3 Hierarchical Fairshare/Share Trees

Moab supports arbitrary depth hierarchical fairshare based on a share tree. In this model, users, groups, classes, and accounts can be arbitrarily organized and their usage tracked and limited. Moab extends common share tree concepts to allow mixing of credential types, enforcement of ceiling and floor style usage targets, and mixing of hierarchical fairshare state with other priority components.

The FSTREE parameter can be used to define and configure the share tree used in fairshare configuration. This parameter supports the following attributes:

Current tree configuration and monitored usage distribution are available using the mdiag -f -v command.

5.3.3.B Controlling Tree Evaluation

Moab provides multiple policies to customize how the share tree is evaluated.

| Policy | Description |
|---|---|
| FSTREETIERMULTIPLIER | Decreases the value of sub-level usage discrepancies. It can be a positive or negative value. When positive, the parent's usage in the tree takes precedence; when negative, the child's usage takes precedence. The usage amount is not changed, only the coefficient used when calculating the value of fstree usage in priority. When using this parameter, it is recommended that you research how it changes the values in mdiag -p to determine the appropriate use. |
| FSTREECAP | Caps lower level usage factors to prevent them from exceeding upper tier discrepancies. |

Using FS Floors and Ceilings with Hierarchical Fairshare

All standard fairshare facilities including target floors, target ceilings, and target caps are supported when using hierarchical fairshare.

Multi-Partition Fairshare

Moab supports independent, per-partition hierarchical fairshare targets allowing each partition to possess independent prioritization and usage constraint settings. This is accomplished by setting the PERPARTITIONSCHEDULING attribute of the FSTREE parameter to TRUE in moab.cfg and setting partition="name" in your <fstree> leaf.

FSTREE[tree]
<fstree>
  <tnode partition="slave1" name="root" type="acct" share="100" limits="MAXJOB=6">
    <tnode name="accta" type="acct" share="50" limits="MAXSUBMITJOBS=2 MAXJOB=1">
      <tnode name="fred" type="user" share="1" limits="MAXWC=1:00:00">
      </tnode>
    </tnode>
    <tnode name="acctb" type="acct" share="50" limits="MAXSUBMITJOBS=4 MAXJOB=3">
      <tnode name="george" type="user" share="1" >
      </tnode>
    </tnode>
  </tnode>
  <tnode partition="slave2" name="root" type="acct" share="100" limits="MAXSUBMITJOBS=6 MAXJOB=5">
    <tnode name="accta" type="acct" share="50">
      <tnode name="paul" type="user" share="1">
      </tnode>
    </tnode>
    <tnode name="acctb" type="acct" share="50">
      <tnode name="ringo" type="user" share="1">
      </tnode>
    </tnode>
  </tnode>
</fstree>

If no partition is specified for a given share value, then this value is assigned to the global partition. If a partition exists for which there are no explicitly specified shares for any node, this partition will use the share distribution assigned to the global partition.

Dynamically Importing Share Tree Data

Share trees can be centrally defined within a database, flat file, information service, or other system and this information can be dynamically imported and used within Moab by setting the FSTREE parameter within the Identity Managers. This interface can be used to load current information at startup and periodically synchronize this information with the master source.

To create a fairshare tree in a separate XML file and import it into Moab:

- Create a file to store your fairshare tree specification. Give it a descriptive name and store it in your Moab home directory ($MOABHOMEDIR or $MOABHOMEDIR/etc). In this example, the file is called fstree.dat.

- In the first line of fstree.dat, set FSTREE[myTree] to indicate that this is a fairshare file.

- Build a tree in XML to match your needs. For example:

FSTREE[myTree]
<fstree>
  <tnode name="root" share="100">
    <tnode name="john" type="user" share="50" limits="MAXJOB=8 MAXPROC=24 MAXWC=01:00:00"></tnode>
    <tnode name="jane" type="user" share="50" limits="MAXJOB=5"></tnode>
  </tnode>
</fstree>

This configuration creates a fairshare tree in which users share a value of 100. Users john and jane share the value equally, because each has been given 50.

Because 100 is an arbitrary number, users john and jane could be assigned 10000 and 10000 respectively and still have a 50% share under the parent node. To keep the example simple, however, it is recommended that you use 100 as your arbitrary share value and distribute the share as percentages. In this case, john and jane each have 50%.

If the users' numbers do not add up to at least the fairshare value of 100, the remaining value is shared among all users under the tree. For instance, if the tree had a value of 100, user john had a value of 50, and user jane had a value of 25, then 25% of the fairshare tree value would belong to all other users associated with the tree. By default, tree leaves do not limit who can run under them.

Each value specified in the tnode elements must be contained in quotation marks.

- Optional: Share trees defined within a flat file can be cumbersome; consider running tidy for XML to improve readability. Sample usage:

> tidy -i -xml mam-tiy.cfg <filename> <output file>

# Sample output
FSTREE[myTree]
<fstree>
  <tnode name="root" share="100">
    <tnode name="john" type="user" share="50" limits="MAXJOB=8
    MAXPROC=24 MAXWC=01:00:00"></tnode>
    <tnode name="jane" type="user" share="50" limits="MAXJOB=5">
    </tnode>
  </tnode>
</fstree>

- Link the new file to Moab using the IDCFG parameter in your Moab configuration file.

IDCFG[myTree] server="FILE:///$MOABHOMEDIR/etc/fstree.dat" REFRESHPERIOD=INFINITY

Moab imports the myTree fairshare tree from the fstree.dat file. Setting REFRESHPERIOD to INFINITY causes Moab to read the file each time it starts or restarts, but setting a positive interval (e.g. 4:00:00) causes Moab to read the file more often. See Refreshing Identity Manager Data for more information.

- To view your fairshare tree configuration, run mdiag -f. If it is configured correctly, the tree information will appear beneath all the information about your fairshare settings configured in moab.cfg.

> mdiag -f
Share Tree Overview for partition 'ALL'
Name Usage Target (FSFACTOR)
---- ----- ------ ------------
root 100.00 100.00 of 100.00 (node: 1171.81) (0.00)
- john 16.44 50.00 of 100.00 (user: 192.65) (302.04) MAXJOB=8 MAXPROC=24 MAXWC=3600
- jane 83.56 50.00 of 100.00 (user: 979.16) (-302.04) MAXJOB=5

The settings you configured in fstree.dat appear in the output. The tree of 100 is shared equally between users john and jane.
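To sanity-check a tree file before importing it, the share percentages and limits can be computed with a short Python script. The sketch below parses the fstree.dat example with the standard library XML parser. The helper names are hypothetical. The share rule (normalize by the larger of the parent share and the sum of child shares) is an inference from the description in this section, not confirmed Moab behavior.

```python
import xml.etree.ElementTree as ET

FSTREE_FILE = """FSTREE[myTree]
<fstree>
  <tnode name="root" share="100">
    <tnode name="john" type="user" share="50" limits="MAXJOB=8 MAXPROC=24 MAXWC=01:00:00"></tnode>
    <tnode name="jane" type="user" share="50" limits="MAXJOB=5"></tnode>
  </tnode>
</fstree>
"""


def load_tree(text: str):
    """Split off the FSTREE[...] header line and parse the XML that follows."""
    header, xml_body = text.split("\n", 1)
    return header.strip(), ET.fromstring(xml_body)


def wc_to_seconds(value: str) -> int:
    """Convert [[HH:]MM:]SS to seconds, e.g. 01:00:00 -> 3600."""
    seconds = 0
    for part in value.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds


def parse_limits(spec: str) -> dict:
    """Parse a limits attribute such as 'MAXJOB=8 MAXWC=01:00:00'."""
    limits = {}
    for item in spec.split():
        key, value = item.split("=", 1)
        limits[key] = wc_to_seconds(value) if key == "MAXWC" else int(value)
    return limits


def child_shares(parent) -> dict:
    """Percentage share of each direct child (assumed normalization rule)."""
    children = parent.findall("tnode")
    total = sum(float(c.get("share")) for c in children)
    denom = max(float(parent.get("share")), total)
    return {c.get("name"): 100.0 * float(c.get("share")) / denom for c in children}


name, tree = load_tree(FSTREE_FILE)
root = tree.find("tnode")
print(name, child_shares(root))
for node in root.findall("tnode"):
    print(node.get("name"), parse_limits(node.get("limits", "")))
```

The MAXWC value of 01:00:00 converts to 3600 seconds, which matches the MAXWC=3600 shown for john in the mdiag -f output.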

Specifying Share Tree Based Limits

Limits can be specified on internal nodes of the share tree using standard credential limit semantics. The following credential usage limits are valid:

- MAXIJOB (Maximum number of idle jobs allowed for the credential)

- MAXJOB

- MAXMEM

- MAXNODE

- MAXPROC

- MAXSUBMITJOBS

- MAXWC

Example 5-7: FSTREE limits example

FSTREE[myTree]
<fstree>
  <tnode name="root" share="100">
    <tnode name="john" type="user" share="50" limits="MAXJOB=8 MAXPROC=24 MAXWC=01:00:00"></tnode>
    <tnode name="jane" type="user" share="50" limits="MAXJOB=5">
    </tnode>
  </tnode>
</fstree>

Other Uses of Share Trees

If a share tree is defined, it can be used for purposes beyond fairshare, including organizing general usage and performance statistics for reporting purposes (see showstats -T), enforcement of tree node based usage limits, and specification of resource access policies.
