Resource Control and Access

In a Moab peer-to-peer grid, resources can be viewed in one of two models:

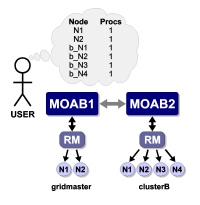

Direct node import is the default resource information mode. No additional configuration is required to enable this mode.

In the other mode, node names are mapped on import. Nodes are reported just as they appear locally by the exporting cluster; on the importing cluster side, however, Moab maps the reported node names using the resource manager object map. In an object map, node mapping is specified using the node keyword, as in the following example:

    SCHEDCFG[gridmaster] MODE=NORMAL
    RMCFG[clusterB] TYPE=moab OMAP=file://$HOME/clusterb.omap.dat
    ...

node:b_*,*

In this example, all nodes reported by clusterB have the string 'b_' prepended to prevent node name space conflicts with nodes from other clusters. For example, if cluster clusterB reported the nodes node01, node02, and node03, cluster gridmaster would report them as b_node01, b_node02, and b_node03.
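The renaming in this example can be sketched in a few lines of Python. This is only an illustration of the effect described above, not Moab's implementation. The helper name `import_node_names` is hypothetical, and it models only the prefix case from the `node:b_*,*` entry rather than the full object map syntax.

```python
def import_node_names(reported, prefix="b_"):
    """Return the names an importing cluster would show for reported nodes.

    Models only the prefix rule from the example entry `node:b_*,*`;
    the general object map syntax is not handled here (assumption).
    """
    return [prefix + name for name in reported]


# Nodes as reported by the exporting cluster (clusterB in the example).
print(import_node_names(["node01", "node02", "node03"]))
# prints ['b_node01', 'b_node02', 'b_node03']
```

Because every imported name carries the prefix, a node01 owned by another cluster can coexist with b_node01 on the importing side.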

See object mapping for more information on creating an object map file.

Queue information and access can be managed directly using the RMLIST attribute. This attribute can contain either a comma-delimited list of resource managers that can view the queue or, if each entry is prefixed with a '!' (exclamation point) character, a list of resource managers that cannot view or access the queue. The example below highlights the use of RMLIST.

    # every resource manager other than chemgrid and biogrid
    # may view/utilize the 'batch' queue
    CLASSCFG[batch] RMLIST=!chemgrid,!biogrid

    # only the local resource manager, pbs2, can view/utilize the staff queue
    CLASSCFG[staff] RMLIST=pbs2
    ...
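The allow/deny reading of RMLIST can be modeled as follows. This is a hedged sketch of the rule as the text describes it, not Moab code. The function name `rm_can_access` is hypothetical, and two behaviors are assumptions: an empty value is treated as unrestricted, and a list containing any '!' entry is treated as a deny list.

```python
def rm_can_access(rmlist, rm_name):
    """Decide whether resource manager `rm_name` may view/use a queue.

    `rmlist` is the RMLIST value as a string. Entries prefixed with '!'
    form a deny list; otherwise the entries form an allow list.
    Assumptions: an empty RMLIST places no restriction, and any '!'
    entry makes the whole list a deny list.
    """
    entries = [e.strip() for e in rmlist.split(",") if e.strip()]
    if not entries:
        return True
    denied = {e[1:] for e in entries if e.startswith("!")}
    if denied:
        return rm_name not in denied
    return rm_name in set(entries)


# Values taken from the CLASSCFG lines in the example.
print(rm_can_access("!chemgrid,!biogrid", "chemgrid"))  # prints False
print(rm_can_access("!chemgrid,!biogrid", "pbs2"))      # prints True
print(rm_can_access("pbs2", "biogrid"))                 # prints False
```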

If more advanced queue access/visibility management is required, consider using the resource manager object map feature.

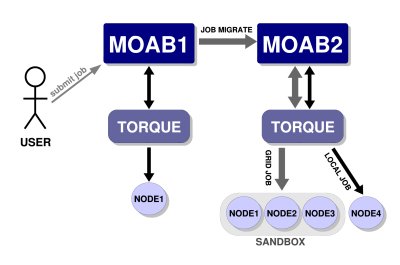

A cluster may wish to participate in a grid but dedicate only a set amount of resources to external grid workload, or allow only certain peers to access particular sets of resources. With Moab, this can be achieved by way of a grid sandbox, which must be configured at the destination cluster. Grid sandboxes can both constrain external resource access and limit which resources are reported to other peers. This allows a cluster to report only a defined subset of its total resources to source peers and restricts peer workload to the sandbox. The sandbox can be set aside for peer use exclusively, or it can also allow local workload to run inside it. Through the use of multiple, possibly overlapping grid sandboxes, a site may fully control resource availability on a per-peer basis.

A grid sandbox is created by configuring a standing reservation on a destination peer and then specifying the ALLOWGRID flag on that reservation. This flag tells the Moab destination peer to treat the standing reservation as a grid sandbox, and, by default, only the resources in the sandbox are visible to grid peers. Also, the sandbox only allows workload from other peers to run on the contained resources.

Example 1: Dedicated Grid Sandbox

    SRCFG[sandbox1] PERIOD=INFINITY HOSTLIST=node01,node02,node03
    SRCFG[sandbox1] CLUSTERLIST=ALL FLAGS=ALLOWGRID
    ...

In the above example, the standing reservation sandbox1 creates a grid sandbox which always exists and contains the nodes node01, node02, and node03. By default, this sandbox allows only grid workload to run within it, so the scheduler will not consider the sandboxed resources for local workload.

Grid sandboxes inherit all of the same power and flexibility that standing reservations have. See Managing Reservations for additional information.

The ALLOWGRID flag marks the reservation as a grid sandbox and, as such, precludes grid jobs from running anywhere else. However, it does not enable access to the reserved resources. The CLUSTERLIST attribute in the above example enables access to all remote jobs.

Often clusters may wish to control which peers are allowed to use certain sandboxes. For example, Cluster A may have a special contract with Cluster B and will let overflow workload from Cluster B run on 60% of its resources. A third peer in the grid, Cluster C, doesn't have the same contractual agreement, and is only allowed 10% of Cluster A at any given time. Thus two separate sandboxes must be made to accommodate the different policies.

    SRCFG[sandbox1] PERIOD=INFINITY HOSTLIST=node01,node02,node03,node04,node05
    SRCFG[sandbox1] FLAGS=ALLOWGRID CLUSTERLIST=ClusterB
    SRCFG[sandbox2] PERIOD=INFINITY HOSTLIST=node06 FLAGS=ALLOWGRID
    SRCFG[sandbox2] CLUSTERLIST=ClusterB,ClusterC,ClusterD USERLIST=ALL
    ...

The above sample configuration illustrates how Cluster A could set up its sandboxes to follow a more complicated policy. In this policy, sandbox1 provides exclusive access to node01 through node05 to jobs coming from peer ClusterB by including CLUSTERLIST=ClusterB in the definition. Reservation sandbox2 provides shared access to node06 to local jobs and to jobs from clusters B, C, and D through use of the CLUSTERLIST and USERLIST attributes.

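With this setup, the following policies are enforced:

- Jobs from ClusterB may run on node01 through node06.
- Jobs from ClusterC and ClusterD may run only on node06.
- Local jobs may share node06 with grid jobs but are kept off node01 through node05.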

As shown in the example above, sandboxes can be shared across multiple peers by listing all sharing peers in the CLUSTERLIST attribute (comma delimited).
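To make the combined effect of the two sandboxes concrete, the following Python sketch models the policy as a lookup. It is an illustration of the behavior described in the text, not of how Moab evaluates reservations. The names `SANDBOXES` and `sandbox_nodes_for` are hypothetical, and the sketch covers only the sandboxed nodes; any other nodes that Cluster A keeps for local workload are outside its scope.

```python
# Sandboxes from the two-sandbox example: hosts, peers named in
# CLUSTERLIST, and whether local jobs are admitted (USERLIST=ALL).
SANDBOXES = {
    "sandbox1": {
        "hosts": ["node01", "node02", "node03", "node04", "node05"],
        "clusters": {"ClusterB"},
        "local": False,
    },
    "sandbox2": {
        "hosts": ["node06"],
        "clusters": {"ClusterB", "ClusterC", "ClusterD"},
        "local": True,
    },
}


def sandbox_nodes_for(origin):
    """List sandboxed nodes a job may use; origin None means a local job."""
    nodes = []
    for sandbox in SANDBOXES.values():
        if origin is None:
            admitted = sandbox["local"]
        else:
            admitted = origin in sandbox["clusters"]
        if admitted:
            nodes.extend(sandbox["hosts"])
    return sorted(nodes)


print(sandbox_nodes_for("ClusterB"))  # node01 through node06
print(sandbox_nodes_for("ClusterC"))  # prints ['node06']
print(sandbox_nodes_for(None))        # prints ['node06']
```

Adding a peer to a sandbox in this model is a matter of adding its name to the `clusters` set, which mirrors extending the comma-delimited CLUSTERLIST.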

It is not always desirable to have the grid sandbox reserve resources exclusively for grid consumption. Many clusters may want to use the grid sandbox when local workload is high and demand from the grid is relatively low. Clusters may also wish to further restrict what kind of grid workload can run in a sandbox. This fine-grained control can be achieved by attaching access control lists (ACLs) to grid sandboxes.

Since sandboxes are basically special standing reservations, the syntax and rules for specifying an ACL are identical to those found in Managing Reservations.

Example

    SRCFG[sandbox2] PERIOD=INFINITY HOSTLIST=node04,node05,node06
    SRCFG[sandbox2] FLAGS=ALLOWGRID QOSLIST=high GROUPLIST=engineer
    ...

In the example above, a cluster decides to dedicate resources to a sandbox but also wants local workload to run within it. An additional ACL is therefore associated with the definition. The reservation 'sandbox2' takes advantage of this feature by allowing local jobs running with a QOS of 'high', or under the group 'engineer', to also run on the sandboxed nodes node04, node05, and node06.
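The ACL check for local jobs in this example reduces to an either/or test, sketched below in Python. The function name `local_job_admitted` is hypothetical, and the sketch assumes, following the wording above, that matching either ACL entry is sufficient. It does not model any other ACL types or modifiers that standing reservations support.

```python
def local_job_admitted(qos=None, group=None):
    """Apply the sandbox2 ACL from the example to a local job.

    The ACL entries are treated as alternatives: matching either
    QOSLIST=high or GROUPLIST=engineer is enough (assumption based
    on the description of the example).
    """
    return qos == "high" or group == "engineer"


print(local_job_admitted(qos="high", group="staff"))    # prints True
print(local_job_admitted(qos="low", group="engineer"))  # prints True
print(local_job_admitted(qos="low", group="staff"))     # prints False
```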

Copyright © 2012 Adaptive Computing Enterprises, Inc.®