HPC Cloud On-Demand Data Center™

For Intelligent Cloud Systems Management

Adaptive Computing’s HPC Cloud On-Demand Data Center™ (ODDC) is a scalable cloud systems management solution that provides an easy way to spin up temporary or persistent HPC cloud infrastructure resources quickly, inexpensively, and on demand. It gives organizations the ability to leverage Cloud Service Provider (CSP) resources without vendor lock-in to any single CSP.

This enterprise-grade platform can be used to automatically deploy and build clusters in the Cloud, automatically run applications on those clusters, and then terminate the cloud resources, ensuring that you pay only for what you use.

CLOUD KNOWLEDGE IS NOT REQUIRED. FIRE OFF JOBS FROM YOUR LAPTOP OR CREATE AND MANAGE A CLUSTER RIGHT FROM YOUR IPAD…ON-DEMAND.

How It Works

Overview

Adaptive Computing’s HPC Cloud On-Demand Data Center™ (ODDC) is a scalable cloud systems management solution that gives organizations the ability to leverage public Cloud Service Provider (CSP) resources without vendor lock-in to any single CSP.

The HPC Cloud On-Demand Data Center is used to spin up temporary or persistent data center infrastructure resources quickly, inexpensively, and on demand. This enterprise-grade solution can be used to automatically deploy and build clusters in the Cloud, automatically run applications on those clusters, and then terminate the cloud resources, ensuring that you pay only for what you use.

The HPC Cloud On-Demand Data Center acts as an abstraction layer on top of CSP management consoles for running HPC workloads in the Cloud. Deploying cloud-hosted resources on any of the major Cloud Service Providers is much easier than working directly through a CSP console, because cloud access is preconfigured and built into the user-friendly GUI (and CLI) of the HPC Cloud On-Demand Data Center.

This preconfigured CSP access eliminates the complexities of running workloads in the Cloud for users without cloud expertise. OCI, AWS, Google Cloud, Azure, and OTC are available through the intuitive interface, making HPC in the Cloud available to non-technical users.

- OCI, AWS, Azure, Google Cloud, and OTC are preconfigured and built into the GUI (and CLI) with deployment-ready access

- Control cloud costs by automatically shutting down nodes when they are not in use (automated deployment and release of nodes)

- Comprehensive management across the following environments:

• Public Cloud

• Private Cloud

• Corporate Cloud

• Containers

• Virtualized

• Edge

- Spin up an unlimited number of nodes in the same amount of time it takes to spin up one

- Configure stacks, run test jobs, run custom jobs, run jobs on any major cloud provider, and view job output from a single interface

- Shared Clusters: Collaborate and share clusters across multiple users with only one set of cloud credentials

- Enhanced file management and job output

- Access specialized resources such as GPUs and large instance sizes

- Move across clouds easily and switch between them

- Multi-cloud: dynamically expand your on-premise cluster to any Cloud Service Provider

- Works with any workload scheduler, or with no scheduler at all

- Manage homogeneous and heterogeneous clusters

- Cloud bursting configurations bring the fastest time to results at the lowest possible cost

- Admins can set up user accounts allowing for cloud cost control and access control

- Flexible pricing and licensing models

- The ODDC destroy cluster command ensures that no orphaned artifacts are left running on the Cloud Service Provider(s)

- Teams can automatically deploy and build clusters in the cloud, automatically run applications on those clusters, and then terminate the cloud resources on a daily, weekly, or even hourly basis

- Reduce your costs by spreading your tech infrastructure across multiple cloud providers and/or on-premise infrastructure based on cost of delivery

- Optimize productivity by taking advantage of automation

- Improve management by providing controls for one-off projects with contractors

- Provide a single point of control for provisioning and deprovisioning infrastructure resources

- Extend your on-premise resources to the cloud to meet peak demand or eliminate backlog

- Create new HPC clusters on any CSP

- Reduce the costs of allocating temporary resources or making additional hardware purchases

- Get true scalability and elasticity

- Increase the capacity of your on-premise data center, access advanced computing power, and gain virtually unlimited capacity

- Prevent CSP vendor lock-in

- Solve cloud migration challenges

- Intelligently manage cloud resources so that they can be used cost-effectively and efficiently

- Increase productivity and accelerate time to results while reducing CapEx costs

- Scalability and immediate availability of resources; instantly launch or scale up infrastructure

- Highly flexible and customizable

- Users without technical knowledge can set up temporary or persistent cloud resources quickly

- Schedule and orchestrate both HPC and Enterprise workloads

- Save frequently used job scripts for quick reuse when needed

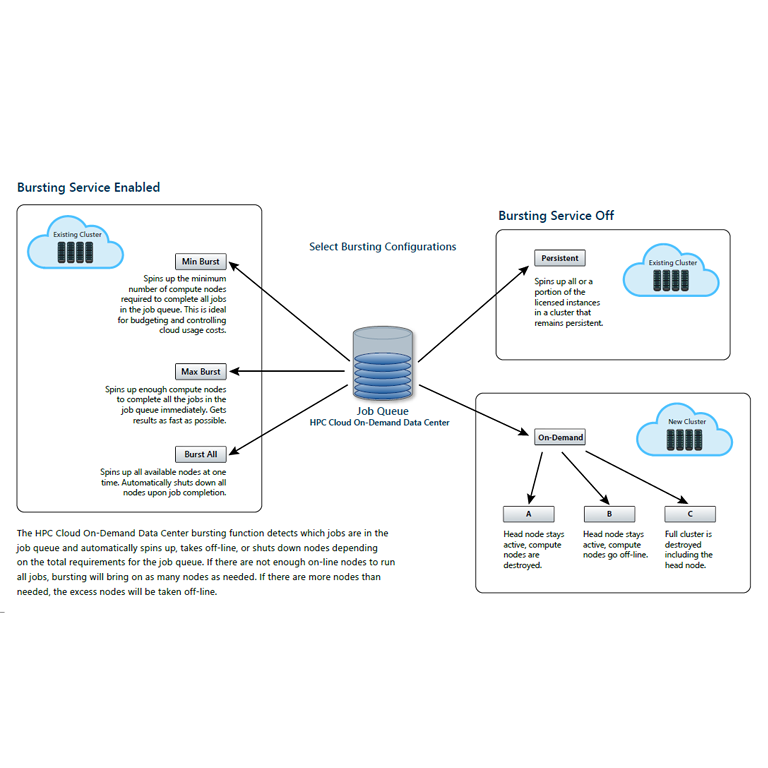

Cloud Bursting

The ODDC enables on-premise systems to ‘burst’ their workload backlog to an external public cloud when local resources cannot accommodate peaks in demand. All required workload resources are automatically deployed as needed. Once the backlog has been processed, the cloud resources are automatically deprovisioned from the cloud provider.

This added flexibility enables admins to expand their own cluster and dynamically use the scalability of the cloud. The ODDC includes all the tools needed to ‘burst’ workloads and applications to the cloud and extend on-premise resources. Cloud bursting can be set up to deploy applications dynamically or on demand.
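To make the bursting behavior described above concrete, here is a minimal sketch of one possible burst policy. It is not the ODDC's actual logic or API: the `Job` type, the function names, and the aggregate core-count model (no bin packing, no per-job constraints) are all hypothetical simplifications. The sketch provisions just enough cloud nodes for the part of the backlog that does not fit on free on-premise cores, and it releases them all once the backlog is empty.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Job:
    """Hypothetical queued job; only its core request matters here."""
    name: str
    cores: int


def nodes_to_burst(backlog: list[Job], free_onprem_cores: int,
                   cores_per_cloud_node: int) -> int:
    """Cloud nodes needed for the backlog that exceeds free on-premise cores.

    Simplification: demand is compared in aggregate, so no job is assumed
    to be split across on-premise and cloud nodes or packed optimally.
    """
    demand = sum(job.cores for job in backlog)
    overflow = max(0, demand - free_onprem_cores)
    return math.ceil(overflow / cores_per_cloud_node)


def reconcile(active_cloud_nodes: int, backlog: list[Job],
              free_onprem_cores: int, cores_per_cloud_node: int) -> int:
    """Change in cloud node count: >0 means provision, <0 means deprovision."""
    target = nodes_to_burst(backlog, free_onprem_cores, cores_per_cloud_node)
    return target - active_cloud_nodes


# Peak demand: 96 requested cores, 48 free on-premise, 16-core cloud nodes.
backlog = [Job("sim-a", 64), Job("sim-b", 32)]
print(reconcile(0, backlog, free_onprem_cores=48, cores_per_cloud_node=16))
# Backlog drained: the three burst nodes are released.
print(reconcile(3, [], free_onprem_cores=48, cores_per_cloud_node=16))
```

Returning a signed delta rather than a target keeps provisioning and deprovisioning in one code path, which mirrors the deploy-then-release cycle the text describes.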

Deliver Hybrid IT or a Pure Cloud Solution

Balance workloads between on-premise and cloud infrastructures. Deliver Hybrid IT by spreading your tech infrastructure across different cloud providers and on-premise infrastructure.

As a Hybrid Solution: Run your on-premise workload backlog in the Cloud using the ODDC. Organizations can achieve a true ‘hybrid cloud’ experience and expand their on-premise resources by ‘bursting’ their workload backlog to the cloud, eliminating long wait times in job queues and providing a better end-user experience.

As a Pure Cloud Solution: Run multiple application types (including newer workloads such as AI) in the Cloud using the ODDC.

Auto-Deploy CI/CD Pipelines

The ODDC improves CI/CD by enabling automation at any stage of the pipeline, and it can be set up quickly to handle a new pipeline. Developers can use the ODDC framework to deploy different combinations of SDLC toolchains.

Cost-Effective Automation Testing

The ODDC framework allows developers to test on a wide variety of high-performance machines and environments, saving organizations time and money by not tying up expensive in-house resources as test environments. When large development teams test, having dedicated resources in continually refreshed cloud environments is a competitive advantage. The ODDC shuts down active cloud resources when they are not in use, preventing escalating and unnecessary cloud costs. When large teams of developers use cloud resources for testing, this can add up to significant cost savings.

Automated Infrastructure Provisioning

Application Deployment and Portability

Composable Infrastructure

With the ODDC, users can select infrastructure resources in the Cloud on a case-by-case basis to meet specific workload requirements. Select custom infrastructure components such as CPUs, GPUs, memory size, storage, and network type. Choosing these components separately allows for unlimited infrastructure configuration options, which makes it easy to match cloud resources to the specific requirements of individual workloads.

Stacks and Deployment Images

The ODDC lets users define all the stack components an application needs to run in the Cloud. The ODDC takes those definitions and automatically builds the deployment image needed to run the workload in the Cloud. The same job script can be used both on-premise and in the Cloud.